By Alli Perkins, Director of Search & Organic Experience

“Trust me, bro…” is not exactly a strong client reporting metric, even though some days reporting feels a lot like that.

I woke up way too early this morning, thinking about all the ways we might be able to measure organic search success as we move into the new fiscal year. Naturally, I’ve been deep in the AI rabbit hole (as most of us in SEO or marketing have been). One of the hardest parts right now is proving impact when your content might be training AI models without any visible credit or measurable return.

You can create genuinely useful content that answers real questions, and it might still not drive clicks. It might not even show up in analytics. So how do you prove it’s doing anything?

I decided to run some tests.

Source: Claude (Anthropic)

The Methodology

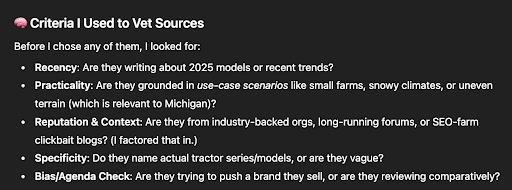

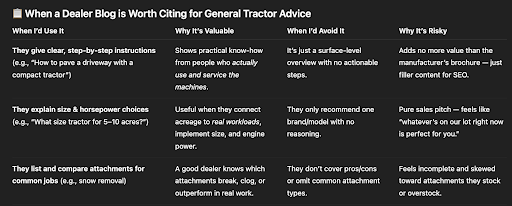

I asked multiple AI platforms (both paid and free tiers), including ChatGPT (GPT-4o and GPT-5), Claude, Perplexity, and Gemini, a few questions I knew some of our clients had strong content covering. Things like:

- How do you pave a driveway with a compact tractor?

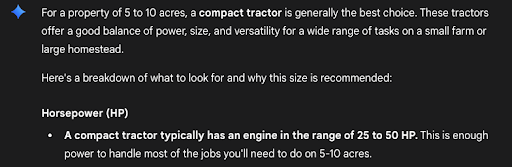

- What size tractor is best for 5-10 acres?

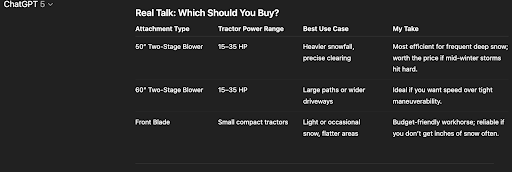

- What attachments work best for snow removal?

Not branded queries. Just honest, helpful, mid-funnel questions that real customers ask.

The responses I got back were pretty interesting, and all a little different. Some platforms (ChatGPT and Gemini, for example) didn’t cite anyone in my first round of questions. But the structure was familiar. The phrasing, the examples… in some cases, the answers sounded like they were pulled straight from something I knew my team had written. They weren’t identical, but they were close enough to recognize the influence.

So I started asking follow-up questions. For each question, I asked the LLMs for a thorough breakdown of where their information came from. Each platform showed its own distinct patterns.

Source: ChatGPT (OpenAI)

What I Found: Platform-by-Platform Insights

In my first round of questions, I noticed clear differences in how each platform handled answers and citations. Some patterns were obvious immediately; others showed up after more targeted testing.

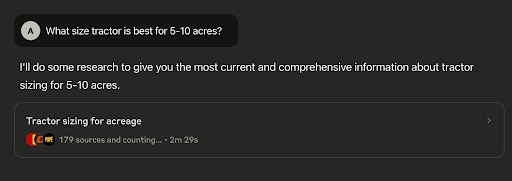

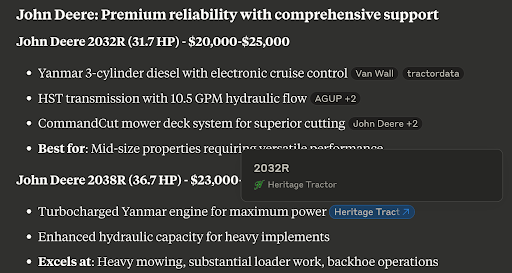

Claude (especially in research mode)

Claude pulled from every major tractor brand in my initial queries, seemingly aiming for an even playing field. When I got more specific with Deere-only and region-specific questions, the citations changed noticeably. It started referencing individual dealers alongside Deere.com, but it almost exclusively linked to product-specific pages and rarely to blogs or educational content. In research mode, Claude will often rephrase your query before running its own deep dive, which can change both the style of the answer and the mix of sources. In my tests, it favored comprehensive product pages with structured specs, customized descriptions, integrated customer reviews, and clean schema markup.

Source: Claude (Anthropic)

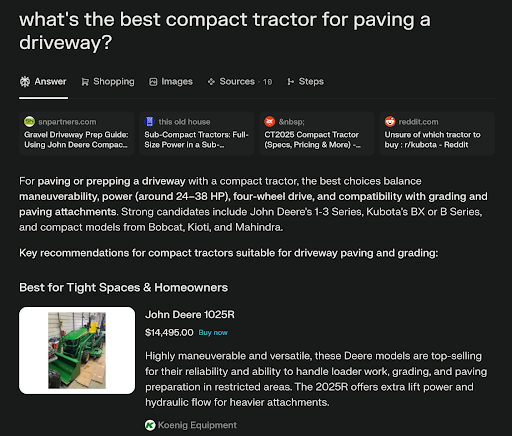

Perplexity

Perplexity’s answers lead with sources. If you’re among the top three to five, your brand appears prominently at the top of the answer, clickable, often with supporting images pulled directly from your pages. In my tests, it cited several of our clients’ blogs in round one, not just their showroom pages. Perplexity’s top sources also frequently include community discussion forums like Reddit.

Source: Perplexity AI

ChatGPT

ChatGPT kept answers much more conversational and “natural,” which often meant no outbound citations at all unless I asked for them directly or used a query that required them (e.g., “Which Deere dealer near me will come out to service my tractor on site?”). In my tests, it consistently avoided linking to sites like Reddit, even though Reddit is a top source for other platforms.

Source: ChatGPT (OpenAI)

Gemini

Gemini’s answers were shorter and more structured, favoring bulleted takeaways over long-form explanations. As you might expect, it leaned heavily on Google-owned properties. When I asked for citations, it surfaced high-authority, schema-rich pages (manufacturer sites, Google Shopping listings, and YouTube videos) while almost completely ignoring community forums and dealer blogs. Even when prompted for “unbiased” sources, it circled back to official or product-driven pages. That consistency makes Gemini predictable. Frankly, it feels less like an independent research tool and more like an extension of Google’s own SERPs.

Source: Gemini (Google DeepMind)

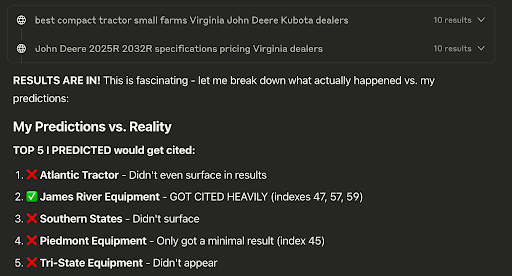

The Prediction Game

Then I got curious. I started asking each LLM to first predict:

- Which types of sites would appear in citations the most and least

- Which specific brands & websites would appear in the top 5

- Any specific brands or types of web pages they did NOT expect to show up at all

After the predictions, I asked them to run the tests and compare the actual results to their predictions.

This is where it got really interesting. The patterns became much clearer when I could see how each platform “thought” about information hierarchies.

Source: Claude (Anthropic)

When asked to predict citations before answering, Claude was the most confident, but it was often wrong once the results came back. Perplexity also tried to guess up front, sometimes getting it right but often hedging or correcting itself. ChatGPT and Gemini were more cautious, leaning on broad generalities like “likely manufacturer pages, unlikely forums,” which sounded safe but didn’t reveal much until the answers exposed their real biases. Side by side, the exercise made it clear that each model carries a different map of what counts as “authoritative,” even before it starts pulling sources.

The Ghost Influence Problem 👻

So here’s where it gets tricky. After running these tests, the logic behind how certain pages get chosen became clearer, which gave me a better idea of both how to strategize and how to measure progress.

Source: ChatGPT (OpenAI)

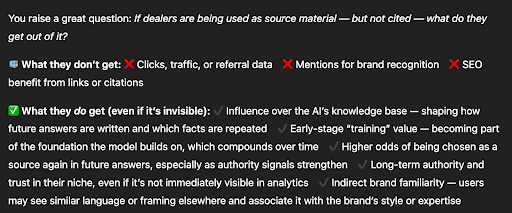

But then there are ChatGPT and similar models that often don’t cite sources at all. So I asked ChatGPT directly: “If one of my clients is a source for your answer, even if the user has no idea and the dealer’s name is nowhere to be found in your response, does the fact that you used it have any real impact on the brand? Does it still gain authority? And if yes… how do we know?”

In its response, it confirmed something really important that a lot of people know but overlook, because it feels like a dead end when it comes to proving value:

Even if you’re not getting the credit line, your brand now has a part in shaping the answers that future customers (and AIs) use to solve problems. When you become part of the framework early on, you’re training the “teacher.” You’re building authority signals that strengthen trust across channels.

The phrase I keep coming back to is “ghost influence.”

Source: ChatGPT (OpenAI)

Your content is out there doing good work, invisibly. It’s becoming more and more enmeshed with the very foundation of the platforms that are leading the search revolution right now. You’re not going to see clicks from that. You’re not going to see big jumps in traffic right away.

SEO has always been the “long game.” What that long game looks like is very different now, and it’s only just starting to evolve.

What This Means for Measurement

We’re building a framework for measuring that kind of authority and impact in this new search reality. It may not be obvious to the average ChatGPT user yet, but that influence is real.

If your title has some version of SEO/SXO/GEO/AIO/Search/Analytics/(you get it), you have to know where to look. You have to know how to dig. You have to know how to create your own ways to measure certain things until we have newer, better methods.

If organic search isn’t your specialty, you’re probably feeling pretty lost right now. And that’s okay. You shouldn’t have to know. You should be able to lean on the experts.

I want my clients to know they can lean on me and my team. We’re not just doing guesswork or recycling the same formula month after month. Every single thing we do is informed by research and data and late nights and early mornings that are fully dedicated to understanding the search experience.

Every time I learn something new, that insight becomes another tool we can offer clients who don’t have the bandwidth to spend this level of energy digging into search optimization themselves. When we’re working together, you can confidently delegate this piece of your strategy. We know how much you juggle already, and we know the load isn’t getting any lighter. We can’t make it all go away, but with the help of my incredible team, I can at least make sure you’re able to take that one hat off.

The Bottom Line

So yeah, “trust me, bro” doesn’t cut it. Proving impact in this new AI landscape requires more than gut feelings. Sometimes the tools we really need just don’t exist yet. We’re in an interesting gap period where measurement hasn’t caught up to reality, which means we have to build our own systems to capture real influence beyond surface-level metrics.

This is the kind of work that fuels me. It keeps me digging deeper, even when no one’s watching. The research, the late-night testing, the early morning lightbulb moments… that’s what separates strategic SEO from checkbox marketing.

What patterns have you picked up on from different AI search engines? I’d love to hear your experiences in the comments.